I’ve been in healthcare IT for a long time, so I am not shocked when I notice that I’m the oldest person in the room during a work meeting. When I was starting out as a physician informaticist, there was exactly one of me for a multi-hundred physician medical group. Our institution wasn’t terribly interested in paying physicians to be involved in technology projects, since leadership tied physician value solely to their ability to generate revenue.

It took more than a decade, and the insistence of a certain EHR vendor during an impending EHR conversion, for the organization to become willing to invest in clinical informatics.

Times have changed, and I am interacting with more members of Generation Z. As digital natives, they have always been connected with technology. This has created greater interest in clinical systems as they enter medical school, particularly for those who have worked as scribes or in clinical roles as part of the application process.

They have built upon these skills during their coursework. They have carried that interest into residency, often seeking opportunities to become EHR super-users, technology evaluators, or part of the technology build team.

Medical education during the last decade, at least at my alma mater, has recognized that learning styles vary. Different course materials are provided, including libraries of lecture videos that have eliminated the need for some students to attend class. Students are more focused on board scores, and many of them work with supplemental board preparation resources while consuming the lecture content at 2x speed.

I wanted to learn more about how this generation approaches learning. One of my favorite teachers recommended that I read “The Anxious Generation” by Jonathan Haidt.

The book summarizes the factors that influence differences across generations, including shared experiences. I immediately thought of the Vietnam War, the Cold War, and the Space Race, which I had heard about during childhood.

The book also notes that generations are defined by the technologies that they use in childhood, from radio, to television, and then from personal computers to the internet and smartphones.

Generation Z is the first to have their adolescence fully chronicled online, with the book noting that they are “the test subjects for a radical new way of growing up, far from the real-world interactions of small communities in which humans evolved. Call it the Great Rewiring of Childhood.”

The book also talks about the transition from a play-based childhood to a device-based childhood, including laptops, tablets, and smartphones. Communications have become disembodied and asynchronous, with increasing communication to a wide audience and within an online world where participants can just leave when they want without necessarily resolving conflicts.

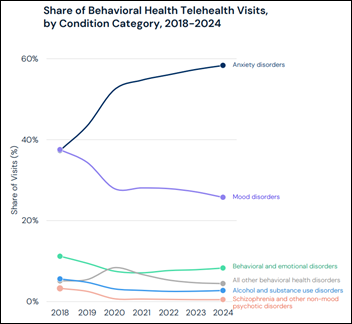

It chronicles the rise of adolescent mental health issues, such as depression and anxiety, that parallel the rise in technology use. It covers the decline of what it calls “risky play” among children, and how that shifts young people from being in “discovery” mode to “defensive” mode. It discusses the idea of a psychological immune system and how humans need challenges to learn how to handle adverse events in a healthy way.

As a proud member of Generation X, one of my favorite parts of the book included pictures of playground equipment that would never be allowed today, each of which was present at my own elementary school.

The book talks about how smartphones and digital tools are often so interesting to users that they lose interest in non-screen-based activities. Anyone who has ever watched a friend or partner vegetate online, rather than participating in a conversation with the person in front of them, is familiar with this phenomenon.

It made me think about whether people who consume material online or through multimedia channels learn with the same level of depth as those who use traditional methods, such as reading or attending a lecture. I certainly wonder about that when I’m working with students who are using AI-based tools to look up clinical information, because anecdotally it feels like retention might be less than what I’m used to seeing with students I precept.

After reading about the decline in risk-taking behaviors among young people, I remembered one of the conversations that I recently had with some adjunct clinical faculty. The discussion was about bedside teaching rounds in the hospital, where traditionally a group of students, interns, and residents works with an attending physician to review cases of admitted patients. Questions are directed to students and trainees, which can be uncomfortable if you haven’t read up on the cases.

Debate followed about whether students should be allowed to consult phones during rounds, or if instead they should have to answer based solely on their knowledge and recall ability.

One colleague noted that students are more likely to answer questions if they are allowed to look up information, and argued that this should be permitted because it parallels what senior physicians do when they don’t know the answer. There was back-and-forth about people who don’t look things up because they think that they know everything, which makes them unsafe.

It was one of the more spirited discussions that I’ve been in this year. In hindsight, I wonder whether students who don’t raise their hands are not lacking knowledge, but are instead avoiding the risk of being wrong.

The book also contains a deep discussion of the “foundational harms” that were caused by this Great Rewiring of Childhood, including social deprivation, sleep deprivation, attention fragmentation, and addiction. I’ve seen these among my pediatric patients. After reading the book, I am more likely to recognize signs of these in colleagues and coworkers, even among the older members of the team.

I’m curious how these elements could also be contributing to clinician burnout, especially since technology is deeply embedded in every aspect of patient care. Unless clinicians are in a strong call group where they can trust signing out to a colleague, and unless they also have the fortitude to avoid checking on patients when they’re not on call, it’s hard for them to get away from their phones.

The book closes with recommendations on what schools and governments can do to help counter the effects of increasing device use. These include limiting access to phones in schools, which is already happening in a number of states. The author also calls for governments and tech companies to address the issue by raising the age for teen internet use from 13 to 16 and for parents to be alert to signs of problematic use.

The book isn’t an easy read. The notes, footnotes, and index run to more than 80 pages. I would love to see a digest version that targets parents who are raising young children, although I’m not sure how well it would resonate given their own communal level of technology immersion.

What do you think about the idea of a Great Rewiring? Are you seeing examples of these phenomena in your workplace and in your families? If you read the book, would you recommend it? What was your favorite takeaway? Do you recommend other books on the topic? Leave a comment or email me.

Email Dr. Jayne.

Great, thanks for getting "we are, we are, oh hoh, oh hoh" stuck in my head again: https://www.youtube.com/watch?v=K5LiUrezV6k